The analysis of variance test for the regression, summarised by the ratio F, shows that the regression itself was statistically highly significant. The r square value tells us that about 42% of the total variation about the Y mean is explained by the regression line. % increase in weight = 167.87 - 0.864 * birth weight. Select the column marked "% Increase" when prompted for the response (Y) variable and then select "Birth weight" when prompted for the predictor (x) variable.Įquation: % Increase = -0.86433 Birth Weight +167.870079ĩ5% CI for population value of slope = -1.223125 to -0.505535Ĭorrelation coefficient (r) = -0.668236 (r²= 0.446539)ĩ5% CI for r (Fisher's z transformed) = -0.824754 to -0.416618Ĭorrelation coefficient is significantly different from zeroįrom this analysis we have gained the equation for a straight line forced through our data i.e. Then select Simple Linear and Correlation from the Regression and Correlation section of the analysis menu. Alternatively, open the test workbook using the file open function of the file menu. To analyse these data in StatsDirect you must first enter them into two columns in the workbook appropriately labelled. The following data represent birth weights (oz) of babies and their percentage increase between 70 and 100 days after birth.

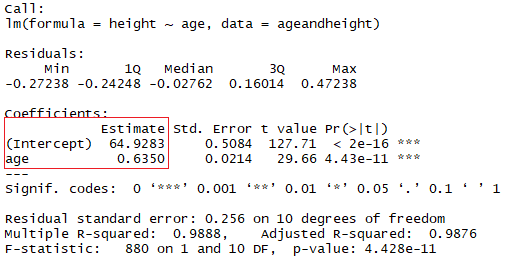

Test workbook (Regression worksheet: Birth Weight, % Increase). Note also that the multiple regression option will also enable you to estimate a regression without an intercept i.e. If you require a weighted linear regression then please use the multiple linear regression function in StatsDirect it will allow you to use just one predictor variable i.e. These belts represent the reliability of the regression estimate, the tighter the belt the more reliable the estimate ( Gardner and Altman, 1989). The estimated regression line may be plotted and belts representing the standard error and confidence interval for the population value of the slope can be displayed. at least one variable must follow a normal distribution.no association) is evaluated using a modified t test ( Armitage and Berry, 1994 Altman, 1991). Thus 1-r² = s²xY / s²Y.Ĭonfidence limits are constructed for r using Fisher's z transformation. 1-r² is the proportion that is not explained by the regression. R² is the proportion of the total variance (s²) of Y that can be explained by the linear regression of Y on x. Pearson's product moment correlation coefficient (r) is given as a measure of linear association between the two variables: If the pattern of residuals changes along the regression line then consider using rank methods or linear regression after an appropriate transformation of your data. it is the distance of the point from the fitted regression line. Y is linearly related to x or a transformation of xĭeviations from the regression line (residuals) follow a normal distributionĭeviations from the regression line (residuals) have uniform varianceĪ residual for a Y point is the difference between the observed and fitted value for that point, i.e. 
This differentiates to the following formulae for the slope (b) and the Y intercept (a) of the line: Regression parameters for a straight line model (Y = a + bx) are calculated by the least squares method (minimisation of the sum of squares of deviations from a straight line). This function provides simple linear regression and Pearson's correlation. Menu location: Analysis_Regression and Correlation_Simple Linear and Correlation.
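The following plain Python (standard library only) is an illustrative sketch of several calculations described in this section. It covers the least-squares slope and intercept, the residuals, Pearson's r and r², a Fisher's z confidence interval for r, and a t statistic for r = 0. It is not StatsDirect's implementation.

Several details are assumptions:

- The function name `simple_linear_regression`, its `t_crit` argument and the five data points are hypothetical.
- The "modified t test" is assumed to be the usual statistic t = r√((n - 2)/(1 - r²)) on n - 2 degrees of freedom.
- Because the standard library has no t quantile function, the caller must supply the t critical value for the slope interval.

```python
import math
from statistics import NormalDist


def simple_linear_regression(x, y, t_crit=None):
    """Sketch: least-squares line Y = a + b*x with Pearson's r and simple inference.

    Assumes paired numeric data, n >= 4, x not constant and |r| < 1.
    t_crit (hypothetical argument) is a two-sided t critical value on n - 2 df,
    e.g. from a t table; if given, a confidence interval for the slope is added.
    """
    n = len(x)
    if n != len(y) or n < 4:
        raise ValueError("need at least 4 paired observations")
    x_bar = sum(x) / n
    y_bar = sum(y) / n

    # Corrected sums of squares and cross-products about the means
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    syy = sum((yi - y_bar) ** 2 for yi in y)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))

    # Least-squares slope (b) and intercept (a)
    b = sxy / sxx
    a = y_bar - b * x_bar

    # Residual = observed Y minus fitted Y for each point
    residuals = [yi - (a + b * xi) for xi, yi in zip(x, y)]
    s2_res = sum(e * e for e in residuals) / (n - 2)  # variance about the line
    se_b = math.sqrt(s2_res / sxx)                    # standard error of b

    # Pearson's product moment correlation coefficient
    r = sxy / math.sqrt(sxx * syy)
    r2 = r * r

    # t statistic for H0: r = 0 on n - 2 df (numerically equal to b / se_b)
    t_r = r * math.sqrt((n - 2) / (1 - r2))

    # 95% confidence interval for r via Fisher's z transformation
    z = math.atanh(r)
    half_width = NormalDist().inv_cdf(0.975) / math.sqrt(n - 3)
    r_ci = (math.tanh(z - half_width), math.tanh(z + half_width))

    fit = {"a": a, "b": b, "se_b": se_b, "r": r, "r2": r2,
           "t_r": t_r, "r_ci": r_ci, "residuals": residuals}
    if t_crit is not None:
        fit["slope_ci"] = (b - t_crit * se_b, b + t_crit * se_b)
    return fit


if __name__ == "__main__":
    # Five made-up points, not the birth weight data from the example
    fit = simple_linear_regression([1, 2, 3, 4, 5], [2, 4, 5, 4, 5],
                                   t_crit=3.182446)  # t(0.975, 3 df)
    print(round(fit["b"], 3), round(fit["a"], 3), round(fit["r2"], 3))  # 0.6 2.2 0.6
```

Computing the t statistic both from r and from b / se_b gives the same value. This reflects the fact that testing r = 0 and testing a zero slope are equivalent in simple linear regression. A two-sided p-value could be obtained from the t distribution with n - 2 degrees of freedom, for example as `2 * scipy.stats.t.sf(abs(t_r), n - 2)` if SciPy is available.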
